Parkinson’s disease is a neurodegenerative disease affecting the motor system. It’s characterised by several symptoms, with the one most people connect to Parkinson’s being a persistent awake resting tremor that disappears with voluntary movement and sleep. Other symptoms include increased rigidity, slow movements (bradykinesia) and postural instability. There are also often substantial cognitive impairments as the disease progresses. The symptoms appear to be caused by the death of cells in the basal ganglia that produce the neurotransmitter dopamine. The reason for this cell death is still not understood.

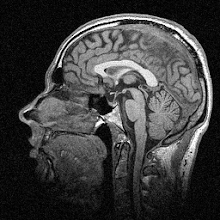

Treatment is available for Parkinson’s, most commonly in the form of L-DOPA, a drug that at first replenishes the amount of dopamine in the system and thus relieves symptoms somewhat. However, it has side-effects and becomes less effective over time, and other drugs are also used to control the symptoms. Relatively recently, deep brain stimulation (DBS) has come to the fore as an effective treatment, especially when drugs aren’t working. The idea is that an electrode is inserted deep into the brain and areas of the basal ganglia are stimulated with electrical impulses to regulate their output, reducing the symptoms.

Because DBS is still quite new, we don’t really know what its long-term effects are. Short-term the effects are spectacular; see this video of a patient with and without his DBS system switched on. It’s quite dramatic (he turns it off at about 1:25):

But what about in the medium to long term? In the paper I discuss today, the researchers followed up eight patients after five years of DBS to see whether there was any effect on either their clinical symptoms or measures of motor performance. Over these five years the patients received continuous DBS and also a drug regimen, adjusted to control their symptoms as required. Symptoms were measured using the Unified Parkinson’s Disease Rating Scale (UPDRS) and the motor kinematics measured were ankle movement speed and strength. Prior to testing, patients stopped taking drugs and turned off their stimulators for 12 hours overnight.

The experimenters tested the patients both with DBS turned on and off at the start of the experiment (year 0) and again five years later (year 5). Their main findings were that, as expected, DBS reduced symptoms and improved movement speed and strength overall – both at year 0 and year 5. When comparing the two time points, however, they found an interesting result. UPDRS scores increased over five years, i.e. symptoms got worse, but the speed and strength of the ankle movement actually improved. So it looks like DBS gave no long-term improvement on the UPDRS scores but did produce an improvement in movement speed and strength.

How can this apparent contradiction be explained? Well, first it’s quite difficult to say what would have happened without DBS over five years, as there was no adequate control group in this study. As Parkinson’s is a degenerative disease, the UPDRS scores would almost certainly have got worse over five years anyway, but it’s very hard to say for sure whether DBS reduced this worsening of symptoms. The researchers do offer an explanation for why this measure got worse while the other motor measures improved: the UPDRS measurement involves repetitive movements like finger tapping, which the basal ganglia are heavily involved in, whereas the ankle movements tested for strength and speed are discrete movements that don’t really need coordinated muscle output over time. These discrete movements therefore aren’t as regulated by the basal ganglia and aren’t as affected by Parkinson’s.

There’s also the possibility that DBS increases dopamine production (aside from just regulating the output of the basal ganglia) and that this actually increases motivation and “energises” action, which is known to improve muscle strength. Also, if DBS improves quality of life and makes patients more active, their muscle strength and speed could improve purely as a result of using their muscles more.

So there’s quite a lot here for a relatively short paper. The most interesting point from a basic science perspective, I think, is the contention that the basal ganglia don’t really have much to do with large discrete movements, which would explain why ankle strength and speed improved while the symptom scores (which are based on repetitive movements) got worse. It’s certainly plausible, though I’d be wary of reading too much into it.

From a clinical point of view, though, I guess the most interesting finding is that sustained DBS does improve motor outcomes over the medium term. But a weakness of this work is that without, as I say, an adequate non-stimulated control group, it’s very difficult to say whether stimulation changes the UPDRS scores relative to what they would have been without it. Of course, there are ethical issues with withholding the best treatment currently available from some patients just so you can compare them with those who receive it.

---

Sturman MM, Vaillancourt DE, Verhagen Metman L, Bakay RA, & Corcos DM (2010). Effects of five years of chronic STN stimulation on muscle strength and movement speed. Experimental Brain Research, 205 (4), 435-43 PMID: 20697699