For example, what happens if the information from two senses gives differing results? How do you adapt and calibrate your senses so that the information you get from one (say, the visual slant of a surface) matches up with the other (the feeling of the surface slant)? In this paper, the investigators set out to answer this question by examining something called the visual dominance hypothesis.

The basic idea is that since we rely so heavily on vision, it will take priority whenever another sense conflicts with it. That is, if you get visual information alongside tactile (touch) information, you will tend to adapt your tactile sense rather than your vision to make the two match up. But here the authors present data and argue in favour of a different hypothesis: reliability-based adaptation, where the less reliable sensory modality will adapt the most. Thus in low-visibility situations you become more reliant on touch, and vice versa.

Two experiments are described in this paper: a cue-combination experiment and a cue-calibration experiment. The combination experiment measured the reliability of the sensory estimators, i.e. vision and touch. The calibration experiment was designed using the estimates from the combination study to test whether the visual dominance hypothesis or the reliability-based hypothesis better explained how the sensory system adapts.

In the combination experiment, participants had to reach out and touch a virtual slanting block in front of them, and then say whether they thought it was slanted towards or away from them. They received either visual or haptic feedback or both (i.e. they could see or touch the object, or both). The cool thing about the setup is that visual reliability could be varied independently of haptic reliability, which enabled the experimenters to find a suitable visual-haptic reliability ratio for each participant for use in the calibration experiment. They settled on parameters that set the visual-to-haptic reliability ratio at 3:1 and 1:3, so either vision was three times as reliable as touch or the other way round.
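To make the reliability ratios concrete, here is a minimal sketch of how two cues might be combined when each is weighted by its reliability. The post doesn't spell out the combination rule, so the weighted average below is an assumption (it is the standard textbook rule), and the function name and the example slant values are made up for illustration.

```python
def combine_cues(visual_slant, haptic_slant, visual_reliability, haptic_reliability):
    """Reliability-weighted average of two slant estimates (in degrees).

    Assumption: each cue's weight is its share of the total reliability.
    This is an illustrative rule, not taken from the post itself.
    """
    total = visual_reliability + haptic_reliability
    visual_weight = visual_reliability / total
    haptic_weight = haptic_reliability / total
    combined = visual_weight * visual_slant + haptic_weight * haptic_slant
    return combined, visual_weight, haptic_weight


# Hypothetical slants: vision says 10 degrees, touch says 2 degrees.
high_vision = combine_cues(10.0, 2.0, visual_reliability=3.0, haptic_reliability=1.0)
low_vision = combine_cues(10.0, 2.0, visual_reliability=1.0, haptic_reliability=3.0)
print(high_vision)  # combined estimate sits closer to the visual slant
print(low_vision)   # combined estimate sits closer to the haptic slant
```

With a 3:1 ratio the combined estimate is pulled three quarters of the way towards vision; with 1:3 it is pulled three quarters of the way towards touch.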

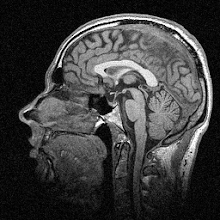

Following this they tested their participants in the calibration study, which involved changing the discrepancy between the visual and haptic slants over a series of trials, using either high (3:1) or low (1:3) visual reliability. You can see the results in the figure below (Figure 4A in the paper):

[Figure: reliability-based vs. visual-dominance hypothesis]

The magenta circles show the adaptation in the 3:1 case, while the purple squares show adaptation in the 1:3 case. The magenta and purple dotted lines show the predictions of the reliability-based adaptation hypothesis (i.e. that the least reliable estimator will adapt the most), while the black dotted line shows the prediction of the visual dominance hypothesis (i.e. that vision will never adapt). It's a nice demonstration that seems to show robust support for reliability-based adaptation and little support for the visual dominance hypothesis.
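The two sets of predictions can be sketched in a few lines of code. This is only an illustration under a simplifying assumption: that under the reliability-based hypothesis each sense absorbs a share of the visual-haptic discrepancy proportional to the other sense's reliability. The post only says the least reliable estimator adapts the most, so the exact proportional split, the function name and the example discrepancy are all hypothetical.

```python
def predicted_adaptation(discrepancy, visual_reliability, haptic_reliability, hypothesis):
    """Return (visual_shift, haptic_shift) needed to remove a discrepancy.

    Assumption: under "reliability_based", each sense shifts in proportion
    to the OTHER sense's reliability, so the less reliable sense moves more.
    Under "visual_dominance", vision never shifts and touch absorbs it all.
    """
    if hypothesis == "visual_dominance":
        return 0.0, discrepancy
    if hypothesis == "reliability_based":
        total = visual_reliability + haptic_reliability
        visual_shift = discrepancy * haptic_reliability / total
        haptic_shift = discrepancy * visual_reliability / total
        return visual_shift, haptic_shift
    raise ValueError(f"unknown hypothesis: {hypothesis}")


# Hypothetical 8-degree conflict between the seen and the felt slant.
for ratio in [(3.0, 1.0), (1.0, 3.0)]:
    for name in ["visual_dominance", "reliability_based"]:
        print(ratio, name, predicted_adaptation(8.0, *ratio, name))
```

The visual dominance prediction is the same for both ratios, whereas the reliability-based prediction flips when the ratio flips, which is what makes the two hypotheses distinguishable in the experiment.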

For me, it's actually not too surprising to read this result. There have been several papers that have shown reliability-based adaptation in vision and in other modalities, but the authors do a good job of showing why their paper is different: partly because purely sensory responses are used to avoid contamination with motor adaptation, and partly because this is the first time that reliabilities have been explicitly measured and used to investigate sensory recalibration.

One thing I wonder about, though, is the variability in the graph above. For the 3:1 ratio (high visual reliability) the variability of responses is much lower than for the 1:3 ratio (low visual reliability). Since the entire point of the combination experiment was to determine the relative reliabilities of the different modalities for the calibration experiment, I would have expected the variability to be the same in both cases. As it is, it looks a bit like vision is inherently more reliable than touch, even when the differences in reliability are supposedly taken into account. Maybe I'm wrong about this though, in which case I'd appreciate someone putting me right!

The authors also model the recalibration process but I'm not going to go into that in detail; suffice it to say that they found the reliability-based prediction is very good indeed as long as the estimators don't drift too much relative to the measurement noise (i.e. the reliability of the estimator). If the drift is very large, the prediction tends to follow the drift instead of the reliability. I think a nice empirical follow-up would be to do a similar study that takes drift into account - proprioceptive drift is a well-known phenomenon that occurs, for example, when you don't move your hand for a while and your perception of its location 'drifts' over time.
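For some intuition about why drift matters, here is a rough sketch using a generic scalar Kalman-style filter. I'm not describing the authors' model here; the filter, the function name and all the numbers are assumptions chosen purely for illustration. The idea is that the filter's update gain (how strongly an estimate is corrected by each new measurement) depends on how large the drift variance is relative to the measurement noise.

```python
def steady_state_gain(drift_variance, measurement_variance, n_steps=200):
    """Iterate a scalar Kalman filter's variance update and return the gain.

    Assumption: a random-walk state (drift) observed with additive noise.
    This is a generic illustration, not the model used in the paper.
    """
    variance = measurement_variance  # arbitrary starting uncertainty
    gain = 0.0
    for _ in range(n_steps):
        predicted = variance + drift_variance            # drift inflates uncertainty
        gain = predicted / (predicted + measurement_variance)
        variance = (1.0 - gain) * predicted              # measurement shrinks it again
    return gain


# Two hypothetical estimators: one with 3x the measurement noise of the other.
for drift in [0.01, 100.0]:
    reliable = steady_state_gain(drift, measurement_variance=1.0)
    unreliable = steady_state_gain(drift, measurement_variance=3.0)
    print(f"drift={drift}: gains {reliable:.3f} vs {unreliable:.3f}")
```

In this toy version, when drift is small the two gains differ noticeably, because the measurement noise does most of the work. When drift is large, both gains end up close to 1 and the difference in measurement noise hardly matters, which is the flavour of the result described above.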

Anyway, generally speaking this is a cool paper and I quite enjoyed reading it. That's my first of three posts this week - I'll have another one up in a day or two. I know this one was a bit long, and I'll try to make subsequent posts a bit shorter! Questions, comments etc. are very welcome, especially on topics like readability. I want this blog to look at the science in depth but also to be fairly accessible to the interested lay audience. That way I can improve my writing and communication skills while also keeping up with the literature. Win-win.

---

Burge, J., Girshick, A., & Banks, M. (2010). Visual-Haptic Adaptation Is Determined by Relative Reliability. Journal of Neuroscience, 30(22), 7714-7721. DOI: 10.1523/JNEUROSCI.6427-09.2010

Image copyright © 2010 by the Society for Neuroscience
