It’s no good sending a complex set of commands to reach for an object if you don’t know how to relate where your hand is right now to where the object is. There are several theories as to how the brain might perform this task. In one theory, the object’s location on the retina is translated into body-centred coordinates (i.e. where it is in relation to the body centre) by adding the eye position and the head position sequentially. In another, the object is stored in a gaze-centred reference frame that has to be recalculated after every movement.
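To make the two schemes concrete, here is a minimal sketch in Python. It is purely illustrative and not taken from the paper: it assumes a single horizontal dimension measured in degrees (positive to the right), and the function names are hypothetical.

```python
def retinal_to_body(retinal_deg, eye_in_head_deg, head_on_body_deg):
    """Body-centred scheme: add eye position, then head position, to the
    target's retinal location (1-D simplification, all angles in degrees)."""
    target_re_head = retinal_deg + eye_in_head_deg   # step 1: add eye position
    return target_re_head + head_on_body_deg         # step 2: add head position


def remap_gaze_centred(target_re_gaze_deg, gaze_shift_deg):
    """Gaze-centred scheme: the stored target location is relative to gaze,
    so every gaze shift moves it by the same amount in the opposite direction."""
    return target_re_gaze_deg - gaze_shift_deg


# A target 10 degrees right of fixation, with the eyes 15 degrees left in the
# head and the head straight on the body, sits 5 degrees left of body midline.
body_centred = retinal_to_body(10, -15, 0)

# A target stored at fixation ends up 15 degrees to the left of gaze once the
# eyes have moved 15 degrees to the right.
remapped = remap_gaze_centred(0, 15)
```

The difference that matters is when the work happens: the body-centred value is computed once and then left alone, whereas the gaze-centred value has to be recomputed after each eye movement.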

There’s already some evidence for the second account – we tend to overestimate how far in our peripheral vision a target sits, and so we actually make pointing errors when asked to reach to where we thought it was. So it seems as if we dynamically update our estimate of where a target is when we are asked to make active movements towards it. In this paper the researchers were interested in whether this was also true for perceptual estimates. That is, when you are simply asked to state the position of a remembered target, does that also depend on gaze shift?

To answer this question, the authors performed an experiment with two different kinds of targets: visual and proprioceptive. (If you’ve been paying attention, you’ll know that proprioception is the sense of where your body is in space.) The visual target was just an LED set out in front of the participant; the proprioceptive target was the participant’s own unseen hand moved through space by a robot. Before the target appeared, participants were asked to look at an LED either straight in front of them, or 15° to the left or right. The target would then appear (or the hand would be moved to the target location), disappear (or the hand would be moved back), and then the participant’s hand would be moved out again to a comparison location. They then had to judge whether their current hand location was to the left or right of the remembered target.

Here’s where it gets interesting. Participants were placed into one of two conditions: static or dynamic. In the static condition, participants kept their gaze fixed on an LED to the left, to the right or straight ahead of their body midline. In the dynamic condition, they gazed straight ahead and were asked to move their eyes to the left or right LED after the target had disappeared. In a gaze-dependent system, this should introduce errors as the target location relative to the hand would be updated relative to gaze after the eye movement. In a gaze-independent system, no errors should be evident as the target position was already calculated before the eye movement.
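The two predictions for the dynamic condition can be sketched as a toy model in Python. This is my own illustration, not the authors' analysis: it assumes the only source of error is that eccentricity relative to gaze is overestimated by a fixed gain, and the gain value, the function name and the parameter names are all hypothetical.

```python
# Hypothetical gain: values above 1 mean peripheral targets are judged to be
# further from the line of gaze than they really are.
OVERESTIMATE_GAIN = 1.1


def predicted_error(target_deg, gaze_at_encoding_deg, gaze_at_judgement_deg,
                    gaze_dependent):
    """Predicted judgement error in degrees (positive = to the right).

    Gaze-dependent account: the target is re-expressed relative to wherever
    gaze is at the time of judgement, so that gaze direction sets the error.
    Gaze-independent account: the position was fixed when the target was
    encoded, so only the gaze direction at encoding matters.
    """
    if gaze_dependent:
        reference_gaze = gaze_at_judgement_deg
    else:
        reference_gaze = gaze_at_encoding_deg
    eccentricity = target_deg - reference_gaze
    perceived = reference_gaze + OVERESTIMATE_GAIN * eccentricity
    return perceived - target_deg


# Dynamic condition: target straight ahead, seen while looking straight ahead,
# then the eyes move 15 degrees to the right before the judgement.
dependent = predicted_error(0, 0, 15, gaze_dependent=True)     # negative: leftward
independent = predicted_error(0, 0, 15, gaze_dependent=False)  # zero: no error
```

Under these assumptions the gaze-dependent account predicts an error in the opposite direction to the eye movement, while the gaze-independent account predicts none, because the later eye movement never enters its calculation.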

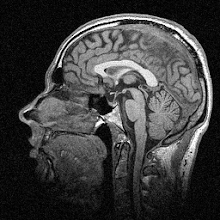

Bias in judgements of visual and proprioceptive targets

The figure above (Figure 4a and 4b in the paper) shows the basic results. Grey is the right fixation while black is the left fixation; circles show the static condition while crosses show the dynamic condition. You can immediately see that in both conditions, for both targets, participants made estimation errors in the opposite direction to their gaze: errors to the left for right gaze, and errors to the right for left gaze. So it does look like perceptual judgements are coded and updated in a gaze-centred reference frame. To hammer home their point, the next figure (Figure 5 in the paper) shows the similarity between the judgements in the static and dynamic conditions:

Static vs. dynamic bias

As you can see, the individual judgements match up very closely indeed, which gives even more weight to the gaze-centred account.

So what does this mean? Well: it means that whenever you move your eyes, whether you are planning an action or not, your brain’s estimation of where objects are in space relative to your limbs is remapped. The reason that the errors this generates don’t affect your everyday life is that usually when you want to reach for an object you will look directly at it anyway, which eliminates the problems of estimating the position of objects on the periphery of your vision.

I enjoyed reading this paper – and there is much more in there about how the findings relate to other work in the literature – but it was a bit wordy and hard to get through at times. One of the most difficult things about writing, I’ve found, is to try and maintain the balance between being concise and containing enough information so that the result isn’t distorted. Time will tell how I manage that on this blog!

---

Fiehler, K., Rösler, F., & Henriques, D. (2010). Interaction between gaze and visual and proprioceptive position judgements. Experimental Brain Research, 203(3), 485-498. DOI: 10.1007/s00221-010-2251-1

Images copyright © 2010 Springer-Verlag

This maps (no pun intended :) nicely onto Wilkie and Wann's model of active gaze and steering control: people steer by looking at where they intend to go. It sounds a little trivial, but actually it solves a lot of problems for accessing the required information.

Sounds interesting, I'll have to check that out! Thanks.

Fresh off the press: http://www.springerlink.com/content/y1q7w88345tk2473/
